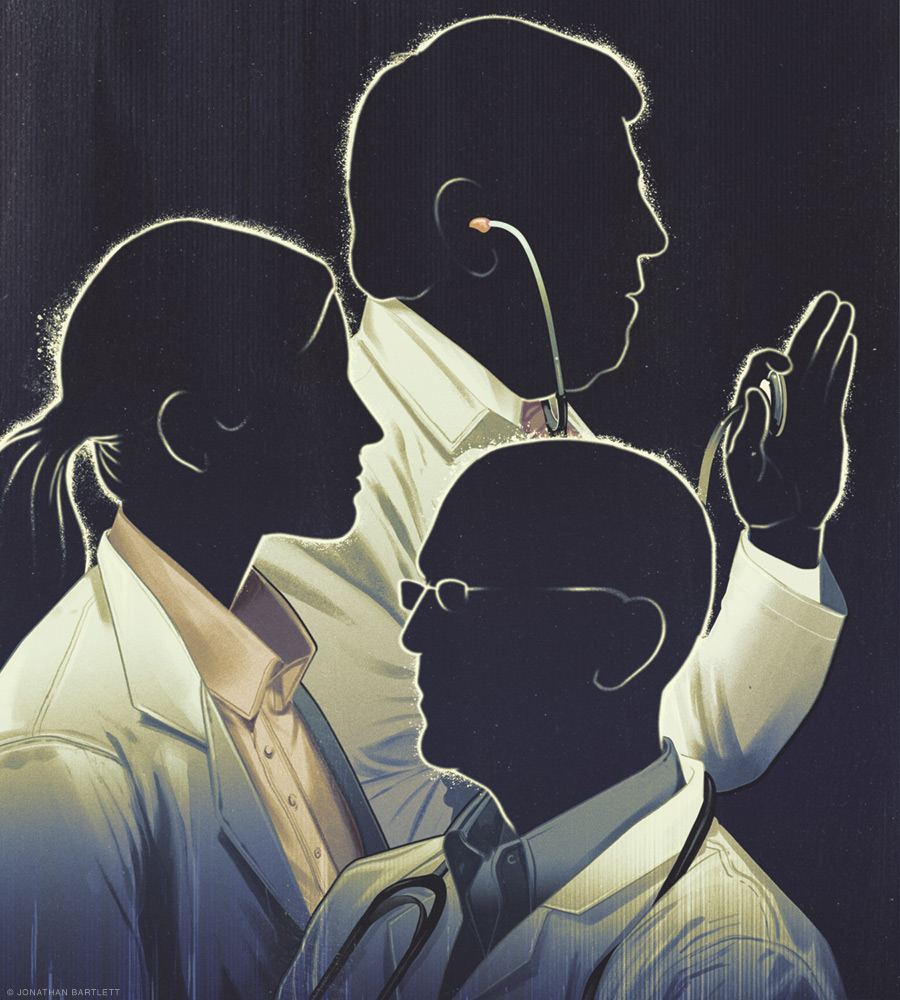

The US must act now to avert a looming shortage of primary care physicians.

By Gregg Coodley

Americans may be divided on many issues, and healthcare policy is certainly no stranger to heated debate, but one preference unites virtually all of us: having a regular relationship with a primary care physician. More than 90 percent of Americans, according to one recent survey, value having a family doctor they can see for any health issue. Yet regular access to a primary care physician seems likely to become rarer and rarer, for their numbers have been falling for decades and there is little sign that the decline will reverse. In 1900, the US had an estimated 173 doctors (almost all general practitioners) per 100,000 residents. By 2005 there were only about 46 primary care physicians per 100,000, and in 2015 the number stood at 41.4. Experts expect the ratio to fall an additional 20 to 25 percent by 2030.

The disappearance of the primary care practitioner is more than an issue of nostalgia, for the evidence is overwhelming that primary care is vital, both at a population level and for the individual. A review of 20 years’ worth of public health studies found a positive correlation between the supply of primary care physicians and better health outcomes across a wide range of measures, from cancer and heart disease to infant mortality and overall life expectancy. Another study found that adults who had a primary care physician rather than a specialist as their usual source of care had lower subsequent five-year mortality rates.

Continuity of care with a primary care practitioner also has a major impact on health. Studies have shown that patients with longer relationships with their primary care physicians are hospitalized less, incur fewer overall costs, visit emergency rooms more rarely, and report the greatest satisfaction with their care.

Perhaps the greatest testimonial to the importance of primary care lies in a 2016 comparison, published in the Journal of the American Medical Association, of the United States with 10 other developed countries. Where the US spends about 5 percent of healthcare dollars on primary care, these other nations spend between 12 and 17 percent. And even though the US spends more overall on healthcare, it trails those countries on measures ranging from life expectancy to infant mortality. Look closer, and a startling primary care practitioner gap emerges. Roughly half the doctors in Canada are primary care physicians, versus only 30 percent in the US. The United Kingdom boasts approximately 60 primary care doctors per 100,000 people, about 50 percent more than the US.

So why, given the clear benefits of primary care, are we facing this massive shortfall of primary care practitioners?

Multiple factors have contributed to this decline, but the overarching dynamic is the abandonment of primary care in favor of medical specialties. In the 1930s, about 85 percent of US physicians were generalists. By the 1960s the proportion had dropped to one half, and specialists have outpaced generalists ever since. By the early 2000s only about 20 to 25 percent of medical school graduates were going into primary care.

Why do generalists make up more than 50 percent of the physician workforce in other Western nations yet remain so much rarer in America?

Perhaps the biggest factor is financial. Primary care physicians, on average, are paid less than half what specialists earn. A 2009 study estimated that doctors choosing high-income specialties could expect an additional $3.5 million in income over their careers. And this financial differential has become increasingly hard to ignore amid the rapid increase in medical school costs and student debt. In 2018 the median cost of medical school was $243,902 for public schools and $322,767 for private schools. The average debt in 2017 for medical students was $192,000. Such debt makes it harder to choose a lower-paying career in primary care.

Non-financial factors have also contributed to the shift. Medical students have flocked to specialties with set hours, such as hospital medicine. Primary care physicians, meanwhile, bear a burden of charting and paperwork that frequently extends into the evenings and weekends. And though doctors of all kinds complain about the ever-increasing load of bureaucratic tasks, evidence shows that this problem is much worse for primary care practitioners than for specialists, as insurance companies exert ever more control over routine medical decisions. The autonomy of primary care physicians has also been eroded in the last 15 years by the rapid engulfment of small independent practices by huge corporations and hospital chains, a trend that has emerged as a significant factor in physician unhappiness.

The resulting demoralization of primary care physicians has led to early retirements, shifts to other careers, and further discouragement to students considering primary care. A 2007 survey revealed that two-thirds of primary care practitioners would not choose primary care again if given the choice. A 2012 survey showed that most of them did not recommend it as a field. A 2019 study showed that almost half of internists and family physicians reported burnout—among the highest levels of all specialties.

Rescuing primary care—and the myriad benefits it delivers to individual patients and the healthcare system more broadly—is a complex task. But there are steps we can take that might help.

First is acknowledging that primary care and continuity of care are important. Patients should not have to switch doctors if they switch insurance providers. This step alone would cut costs and improve patient care, since new doctors would not have to continually reinvent the wheel for a churning patient population. And this step would not require a radical systemic overhaul, for insurers could still control the bulk of costs—which occur in the hospital—by mandating to whom primary care practitioners could refer.

Second, it is time to reevaluate the length and cost of medical education. The repetition of basic sciences in high school, college, and medical school carries an opportunity cost. Studies have shown that cutting medical school from four years to three does not cut quality but does meaningfully reduce student costs and debt. In most other developed nations, training is in fact shorter, with no discernible drop in outcomes.

Third, since hospitalists have now usurped the role of primary care physicians in hospital settings, primary care practitioners don’t really need three years of training that traditionally devotes over 90 percent of its hours to inpatient care. Primary care residencies could be cut to two years, with the second spent entirely in outpatient care, giving graduates far more primary care training and exposure at a reduced cost.

Finally, the income differential between specialists and primary care physicians is an artificial construct, based on a historical undervaluing of talking to patients as opposed to performing procedures. The federal government has driven this dynamic by setting reimbursement rates that are aped by private insurers, and it could use the same tool to shift that dynamic. One study found that fully one-quarter of medical students would shift to primary care if there were adjustments in income and hours to reduce the generalist-specialist gap. This is the most straightforward solution, and one that could bring the US supply of primary care physicians in line with that of other Western nations.

These changes are feasible, would not add to overall healthcare costs, and could be accomplished by fiat by insurers, medical schools, residency programs, and the federal government. It would behoove all parties to act fast across multiple fronts to preserve this fundamental part of the healthcare system. Americans value family doctors for good reason. Sometimes patients really do know best.

Gregg Coodley C’81 is a primary care physician in Oregon and the author of Patients in Peril: The Demise of Primary Care in America (Atmosphere Press, 2022).